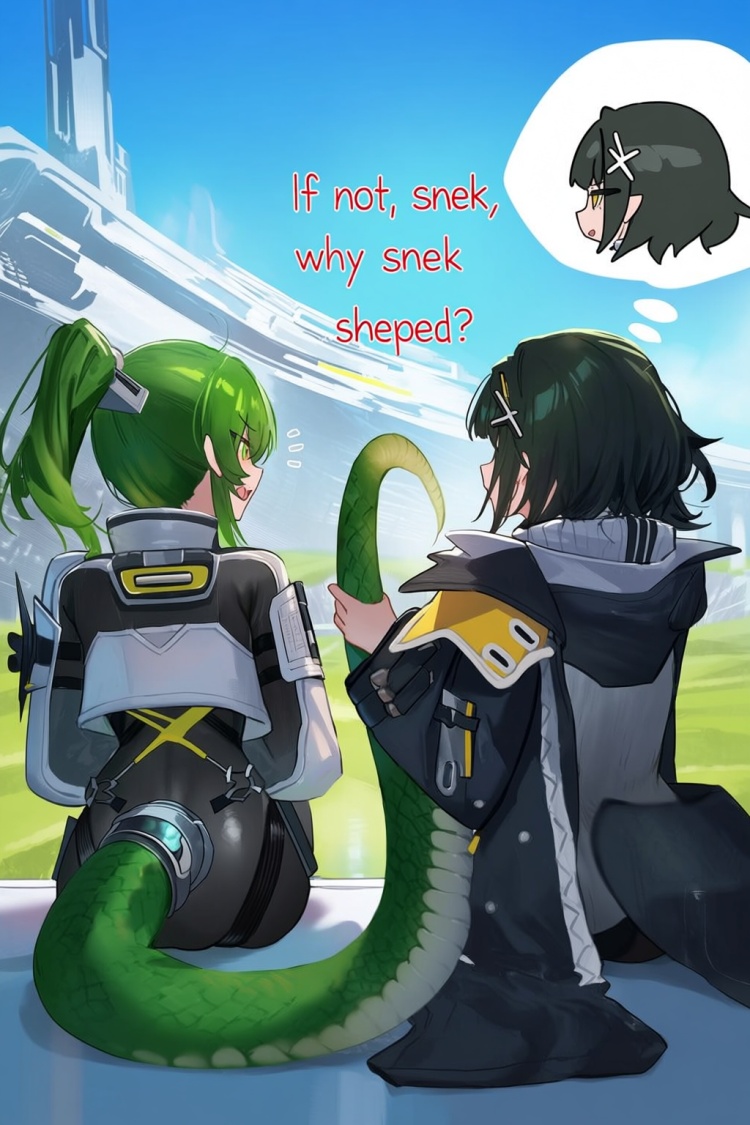

Kirazuri (Anima)

This is an experimental full finetune of the Anima Preview base model.

The training dataset totals 15,420 images covering 2025/07/03 to 2026/03/19, curated with manual human quality and aesthetic ratings. As a result, the model should have a fairly strong recency bias and be able to generate many characters, concepts, and styles that were prominent during that period.

Generation Settings

The model was trained at mixed resolutions for most of the run and finished with a dedicated high-resolution stage. Previews are mostly generated at 1536x1024 or 1024x1536.

1280-base resolutions (about 1280x1280 pixels in area), e.g. 1280x1280, 1536x1024, 1024x1536.

1024-base resolutions (about 1024x1024 pixels in area), e.g. 1024x1024, 896x1152, 1152x896.
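The card does not say exactly how its resolution buckets were built. As a rough sketch, assuming same-area buckets with both sides a multiple of 64 and an aspect ratio of at most 2:1 (common conventions, not confirmed by the card), the hypothetical helper below enumerates landscape and portrait sizes whose pixel area stays at or below the base resolution squared.

```python
def resolution_buckets(base: int, step: int = 64, max_ratio: float = 2.0) -> list[tuple[int, int]]:
    """Hypothetical helper: (width, height) pairs with width * height <= base * base.

    Both sides are multiples of `step`, and the long side is at most
    `max_ratio` times the short side. These constraints are assumptions.
    """
    target_area = base * base
    buckets: set[tuple[int, int]] = set()
    width = base
    while True:
        # Largest multiple of `step` that keeps width * height <= target_area.
        height = (target_area // width) // step * step
        if height == 0 or width / height > max_ratio:
            break
        buckets.add((width, height))  # landscape (or square) size
        buckets.add((height, width))  # the matching portrait size
        width += step
    return sorted(buckets)
```

For example, resolution_buckets(1280) includes 1280x1280, 1536x1024, and 1024x1536, and resolution_buckets(1024) includes 1024x1024, 896x1152, and 1152x896, matching the sizes listed above.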

30-50 steps, CFG 4-5.

The samplers recommended for the base model also work. I like to use:

er_sde: the recommended default for 30-50 steps.

sa_solver_pece: can converge with good detail in 15-20 steps.
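If you script your generations, these settings can be kept as small presets. The sketch below assumes ComfyUI, whose KSampler node takes seed, steps, cfg, sampler_name, scheduler, and denoise values. The preset names, the specific values picked inside the card's ranges, and the "simple" scheduler are illustrative assumptions; the card does not name a scheduler.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SamplerPreset:
    sampler_name: str  # sampler identifier as named in the card
    steps: int
    cfg: float


# Hypothetical presets, chosen inside the card's ranges (30-50 / 15-20 steps, CFG 4-5).
PRESETS = {
    "quality": SamplerPreset("er_sde", steps=40, cfg=4.5),
    "fast": SamplerPreset("sa_solver_pece", steps=18, cfg=4.5),
}


def ksampler_widgets(preset: SamplerPreset, seed: int) -> dict:
    """Widget values for a ComfyUI KSampler node; the model, conditioning,
    and latent inputs are wired separately and are not shown here."""
    return {
        "seed": seed,
        "steps": preset.steps,
        "cfg": preset.cfg,
        "sampler_name": preset.sampler_name,
        "scheduler": "simple",  # assumption: the card does not name a scheduler
        "denoise": 1.0,
    }
```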

Prompting

Like the base model, this model is trained on Danbooru-style tags, natural language captions, and combinations of tags and captions.

Tag order

[quality/meta/safety tags] [1girl/1boy/1other etc] [character] [series] [artist] [general tags]
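The tag order above can be expressed as a small helper. This is a sketch: the function and parameter names are hypothetical, the comma separator is the usual convention for Danbooru-style prompts rather than something the card specifies, and the example tags are taken from the lists elsewhere in this card.

```python
def build_prompt(
    quality_meta_safety: list[str],
    subject_count: list[str],
    character: list[str],
    series: list[str],
    artist: list[str],
    general: list[str],
) -> str:
    """Join tag groups in the recommended order, comma-separated.

    Empty groups are simply skipped.
    """
    groups = [quality_meta_safety, subject_count, character, series, artist, general]
    return ", ".join(tag for group in groups for tag in group)


prompt = build_prompt(
    quality_meta_safety=["masterpiece", "best quality", "very aesthetic", "absurdres"],
    subject_count=["1girl"],
    character=[],
    series=[],
    artist=[],
    general=["solo", "smile", "painterly"],
)
```

Here prompt is "masterpiece, best quality, very aesthetic, absurdres, 1girl, solo, smile, painterly".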

Quality and Aesthetic tags

Based on human scores: masterpiece, best quality, very aesthetic, aesthetic

The "very aesthetic" and "aesthetic" tags are where this model diverges from the base: they are intended to steer the model toward a different aesthetic (a kind of house-style bias).

Meta tags

absurdres, official art, etc.

Styles

painterly, chiaroscuro, ligne claire, flat color, no lineart, blending, etc.

traditional media, oil painting \(medium\), watercolor \(medium\), etc.
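The backslashes in tags like oil painting \(medium\) escape the parentheses, which front ends such as ComfyUI and the AUTOMATIC1111 web UI otherwise read as emphasis (weighting) syntax. A minimal sketch for converting a raw Danbooru tag into that form, assuming you also want underscores replaced with spaces as in the tags listed here (the helper name is hypothetical):

```python
def format_tag(raw_tag: str) -> str:
    """Turn a raw Danbooru tag such as 'oil_painting_(medium)' into prompt form.

    Underscores become spaces (an assumption; a few tags, such as some
    emoticons, use underscores literally), and parentheses are
    backslash-escaped so they are not parsed as emphasis syntax.
    """
    tag = raw_tag.replace("_", " ")
    return tag.replace("(", r"\(").replace(")", r"\)")
```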

Training Details

Samples seen (unbatched steps): ~700,000

Training time: ~85 hrs

Learning Rate: 5e-6 (General Training) and 2e-6 (Aesthetic)

Text Encoder Learning Rate: 1e-6

Effective Batch Size: 24 (General Training, batch size 1) and 32 (Aesthetic, 16x2 batch size)

Precision: Mixed BF16

Optimizer: AdamW8bit with Kahan Summation

Weight Decay: 0.01

Timestep Sampling Strategy: Logit-Normal, Shift 3

Tag Dropout: 10%

Uncond Dropout: 10%

Tag Shuffle: True, keeping the first 8 tags in place
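For a sense of scale, the sample count and training time imply roughly how many passes the model made over the dataset and its average throughput. This is back-of-the-envelope arithmetic that assumes every sample seen came from the 15,420-image set and that the ~85 hours is pure training time; both inputs are approximate.

```python
DATASET_IMAGES = 15_420
SAMPLES_SEEN = 700_000  # approximate, from the card
TRAINING_HOURS = 85     # approximate, from the card

# Equivalent full passes over the dataset.
epochs = SAMPLES_SEEN / DATASET_IMAGES
# Average samples processed per second of training.
samples_per_second = SAMPLES_SEEN / (TRAINING_HOURS * 3600)

print(f"~{epochs:.1f} epochs, ~{samples_per_second:.2f} samples/s")
```

This prints "~45.4 epochs, ~2.29 samples/s".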
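Logit-normal timestep sampling draws a normal value and passes it through a sigmoid, which concentrates training on mid-range noise levels. The sketch below pairs it with the timestep shift used in SD3-style rectified-flow training, t' = s*t / (1 + (s - 1)*t), under the convention that t = 1 is pure noise, so a shift of 3 moves samples toward noisier timesteps. Whether diffusion-pipe combines the two exactly this way, and the mean 0 / std 1 defaults, are assumptions.

```python
import math
import random


def shift_timestep(t: float, shift: float) -> float:
    """SD3-style timestep shift; shift > 1 moves t toward 1 (more noise)."""
    return shift * t / (1.0 + (shift - 1.0) * t)


def sample_timestep(rng: random.Random, shift: float = 3.0,
                    mean: float = 0.0, std: float = 1.0) -> float:
    """Logit-normal draw in (0, 1), followed by the shift."""
    t = 1.0 / (1.0 + math.exp(-rng.gauss(mean, std)))  # sigmoid of a normal sample
    return shift_timestep(t, shift)


rng = random.Random(0)
timesteps = [sample_timestep(rng) for _ in range(10_000)]
```

Because the shift is monotonic, the median of the unshifted distribution (0.5) maps to shift_timestep(0.5, 3.0) = 0.75.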

Additional Features used:

Protected Tags

Mixed natural language prompts at the following ratio:

tags 50%, nl 10%, tags+nl 20%, nl+tags 20%
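The caption settings above can be combined into a single per-sample preprocessing step. This is a sketch only: the card gives the ratios and probabilities but not the exact semantics, so the following are assumptions: tag dropout applies independently to each tag after the first 8, protected tags are never dropped, uncond dropout replaces the whole caption with an empty string, and the tags+nl / nl+tags modes simply concatenate the two captions. The function name is hypothetical.

```python
import random

# Caption mix from the card: tags 50%, nl 10%, tags+nl 20%, nl+tags 20%.
CAPTION_MODES = ["tags", "nl", "tags+nl", "nl+tags"]
MODE_WEIGHTS = [0.5, 0.1, 0.2, 0.2]


def build_caption(
    tags: list[str],
    nl: str,
    rng: random.Random,
    protected: frozenset[str] = frozenset(),
    keep_first: int = 8,
    tag_dropout: float = 0.1,
    uncond_dropout: float = 0.1,
) -> str:
    """Hypothetical per-sample caption builder for training."""
    # Uncond dropout: sometimes train on an empty caption (what CFG relies on).
    if rng.random() < uncond_dropout:
        return ""
    # The first `keep_first` tags stay in place; the rest (a copy) are shuffled.
    head, tail = tags[:keep_first], tags[keep_first:]
    rng.shuffle(tail)
    # Tag dropout on the shuffled tail; protected tags always survive.
    tail = [t for t in tail if t in protected or rng.random() >= tag_dropout]
    tag_text = ", ".join(head + tail)
    # Pick a caption format according to the card's ratios.
    mode = rng.choices(CAPTION_MODES, weights=MODE_WEIGHTS)[0]
    if mode == "tags":
        return tag_text
    if mode == "nl":
        return nl
    if mode == "tags+nl":
        return f"{tag_text}. {nl}"
    return f"{nl} {tag_text}"  # nl+tags
```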

Known Limitations & Issues:

Catastrophic Forgetting

Base model knowledge not covered by this dataset shows significant forgetting, and LoRAs trained on the base model are not expected to work very well with this finetune.

Concept Bleeding

Some bleeding of character and outfit details, and of concepts in general, is noticeable with short prompts.

Longer tag strings and natural language prompts that describe appearance should help somewhat.

Future training will aim to find the right balance between converging quickly on new data and preserving more of the existing knowledge.

Recognitions

Thanks to Circlestone Labs for the Anima Preview base model.

Thanks to tdrussell of Circlestone Labs for the diffusion-pipe trainer.

Thanks to bluvoll for support with their fork of diffusion-pipe.

Thanks to narugo1992 and the deepghs team for open-sourcing various training sets, image processing tools, and models.

License

This model is released under the same license as the base model.

See the base model for details of the CircleStone Labs Non-Commercial License.